Neuromorphic Processing is the Future of AI

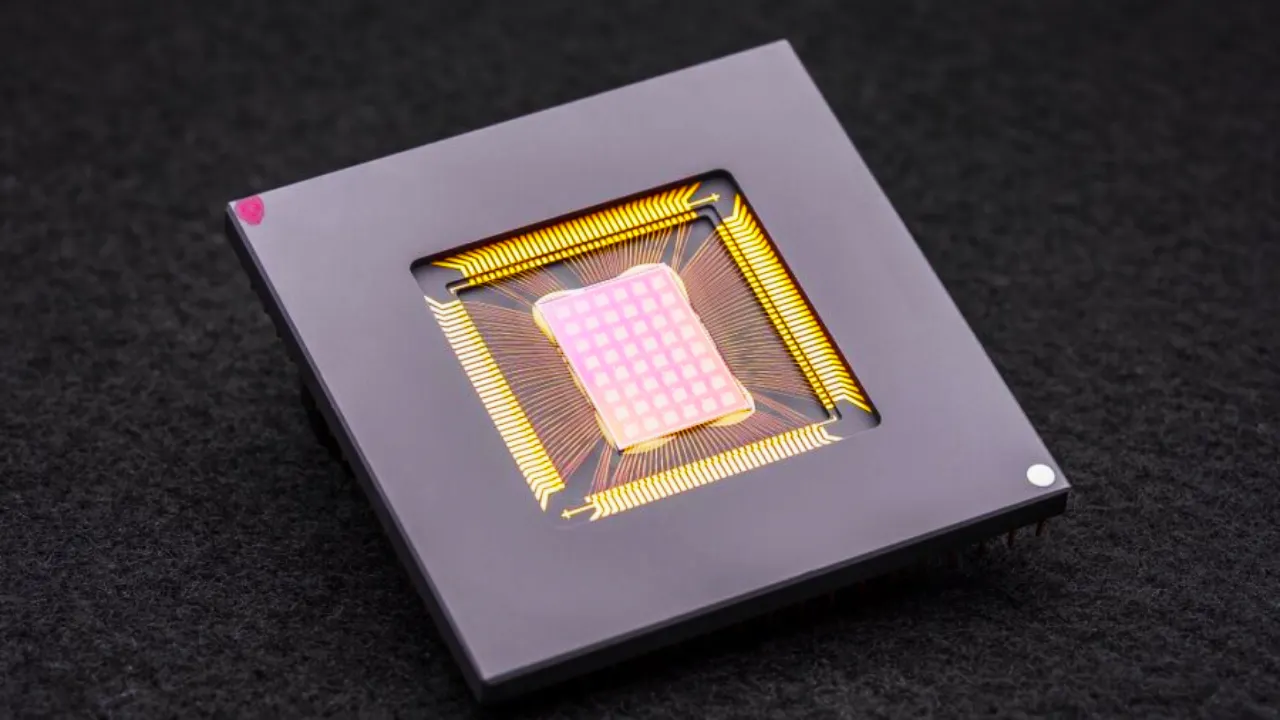

Neuromorphic computing is an approach to computing in which artificial neural networks, often implemented directly in hardware, process information in a manner modeled on the human brain.

Although the technology is still in its early stages, it could transform the way computers are used and may make it possible to create more lifelike artificial intelligence (AI).

Pros and cons of neuromorphic computing

Neuromorphic computing has both pros and cons. Some of the pros include:

1. The ability to process information in a more natural and human-like manner. This could lead to better AI with more human-like cognitive processes.

2. The potential for much faster computing than traditional computers. This is because neural networks can process information in parallel.

3. The ability to consume less energy than traditional computers. This is because neural networks use resources more efficiently.
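Points 2 and 3 can be illustrated with a small simulation. What follows is a minimal sketch, not a model of any particular neuromorphic chip. It assumes a leaky integrate-and-fire neuron, a common simplified neuron model that this article does not itself name, and the function name `simulate_lif` and all parameter values are hypothetical choices made for illustration.

```python
def simulate_lif(currents, steps, tau=20.0, threshold=1.0, v_reset=0.0):
    """Simulate independent leaky integrate-and-fire neurons.

    currents: one constant input current per neuron (arbitrary units,
              an assumption made for this sketch).
    Returns a list of spike-time lists, one per neuron.
    """
    voltages = [0.0] * len(currents)
    spike_times = [[] for _ in currents]
    for t in range(steps):
        # Each neuron's update depends only on its own state, so dedicated
        # hardware could update all neurons at the same time.
        for i, current in enumerate(currents):
            # Leak toward zero, then integrate the input current.
            voltages[i] += -voltages[i] / tau + current
            if voltages[i] >= threshold:
                # The neuron emits a spike (an "event") and resets.
                spike_times[i].append(t)
                voltages[i] = v_reset
    return spike_times


# Three neurons: no input, weak input, strong input.
spikes = simulate_lif([0.0, 0.04, 0.3], steps=100)
print([len(s) for s in spikes])  # prints [0, 0, 25]
```

In this sketch the neurons with no input or weak input never fire. In an event-driven system they would produce nothing to transmit or process, which is one commonly cited reason spiking hardware can use little energy. The loop over neurons is sequential here only because this is ordinary Python; the updates do not depend on one another.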

Some of the cons associated with neuromorphic computing include:

1. The technology is still in its infancy and has yet to be proven. There’s a chance it won’t live up to the hype.

2. It is challenging to create and train neural networks. This could result in significant development costs for neuromorphic computing systems.

Future of neuromorphic computing

The future of neuromorphic computing is full of promise, but it is also packed with challenges. The technology could allow for the development of powerful artificial intelligence (AI) systems that more closely approximate the processes of the human brain. Developing these systems, however, will require a better understanding of how the brain functions and how to simulate those functions effectively on a computer.

This is only the beginning. Neuromorphic computing has a wide range of potential applications, and these systems could eventually be used to create more sophisticated AI for everything from autonomous vehicles to medical diagnosis.

Significant obstacles must be overcome before neuromorphic computing can reach its full potential. One is understanding how the brain works. Despite decades of research, scientists still do not have a complete picture, and this makes it difficult to create effective computer simulations of the brain.

Another challenge is developing algorithms that can learn from data. Current AI systems are frequently restricted to learning from very clean and well-labeled data sets. In the real world, however, data is frequently messy and unlabeled. This poses a challenge for neuromorphic systems, which must learn from data in the same way that humans do.

Despite these obstacles, the future of neuromorphic computing looks bright. The potential applications of this technology will only grow as researchers continue to develop more sophisticated AI systems.

Applications of neuromorphic computing

This technology can be used for various applications, such as pattern recognition, image processing, and signal processing.

These are possible applications of neuromorphic computing, but actual applications may become more specialized or more widespread. We can only speculate for the time being.

- Medical diagnosis is one potential application for neuromorphic computing. This technology could, for example, be used to detect cancerous cells in a patient’s body. It could also be used to track the progress of patients suffering from Alzheimer’s disease and other forms of dementia.

- Robotics is another potential application. This technology could be used to control robotic devices such as prosthetic limbs and unmanned vehicles, and to create robots that are smarter and more efficient than current models.

- Finally, neuromorphic computing could be used to improve security. This technology could help build systems that are more secure against hacking and other forms of cybercrime. Biometric security systems could also benefit from it.