5 Local LLMs With Massive Context Length (Late 2025)

Running long context locally faces a hard physical limit: VRAM. However, raw context length is meaningless if the model is stupid. A bad model with a 100k context window will simply hallucinate or forget the middle of the document. It is significantly better to run a smart model with a moderate 32k-64k window than a dumb model with “infinite” context that can’t reason over it.
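The VRAM limit comes largely from the KV cache, which in a standard Transformer grows linearly with context length. A rough estimate can be sketched as follows; the layer count, KV-head count, and head dimension are illustrative assumptions for a mid-sized model with grouped-query attention, not figures taken from any specific model card.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Approximate KV cache size for a standard Transformer.

    Each token stores one key and one value vector (hence the factor 2)
    per layer, each of size kv_heads * head_dim.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical config: 40 layers, 8 KV heads, head dim 128, FP16 cache.
for tokens in (32_768, 65_536, 131_072):
    gib = kv_cache_bytes(tokens, layers=40, kv_heads=8, head_dim=128) / 2**30
    print(f"{tokens:>7} tokens -> {gib:.1f} GiB of KV cache")
```

Under these assumed dimensions the cache alone costs about 5 GiB at 32k tokens and about 20 GiB at 128k, before any model weights are loaded.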

Here are the top 5 performers as of November 2025 that balance intelligence, context size, and hardware reality.

1. Granite 4.0 H-Small (32B / 9B Active)

The RAM Efficiency King

- Context Limit: 128k (more than most local workloads need)

- Architecture: Hybrid Mamba-2 + Transformer

- VRAM Requirement: ~20GB at Q4 quantization (Runs full 128k context on a single 3090/4090)

Why it’s here: This is the most important model for local users with limited hardware. Most of its layers are Mamba-2 (State Space Model) layers, which keep a fixed-size state instead of a per-token KV cache, so memory for context grows far more slowly than in a pure Transformer. Only the small share of attention layers still scales with input length. You can feed it a 500-page PDF and the extra memory cost stays modest. If you have a single GPU and need to process massive documents, this is the strongest option on the list.

- Best For: Summarizing massive reports, RAG (Retrieval Augmented Generation) on 24GB cards.
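To illustrate why a hybrid design scales better: only attention layers keep a per-token cache, while state-space layers keep a fixed-size state. The sketch below compares the two; the layer split, dimensions, and per-layer state size are hypothetical placeholders chosen for illustration, not Granite's published configuration.

```python
def hybrid_cache_bytes(tokens, attn_layers, ssm_layers,
                       kv_heads=8, head_dim=128,
                       ssm_state_bytes=4 * 2**20, bytes_per_value=2):
    """Rough cache estimate for a hybrid SSM + attention model.

    Attention layers store a key and a value per token (grows with context).
    SSM layers store a fixed-size recurrent state (independent of context).
    ssm_state_bytes is an assumed per-layer state size.
    """
    attn = 2 * attn_layers * kv_heads * head_dim * bytes_per_value * tokens
    ssm = ssm_layers * ssm_state_bytes
    return attn + ssm


tokens = 131_072
pure = hybrid_cache_bytes(tokens, attn_layers=40, ssm_layers=0)
hybrid = hybrid_cache_bytes(tokens, attn_layers=4, ssm_layers=36)
print(f"pure transformer:         {pure / 2**30:.1f} GiB")
print(f"hybrid (4 attn + 36 SSM): {hybrid / 2**30:.2f} GiB")
```

With these made-up numbers the hybrid needs roughly a tenth of the cache at 128k tokens. The cache still grows with context, just through far fewer layers.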

2. Qwen 3 (14B – 30B MoE)

The Reasoning Powerhouse

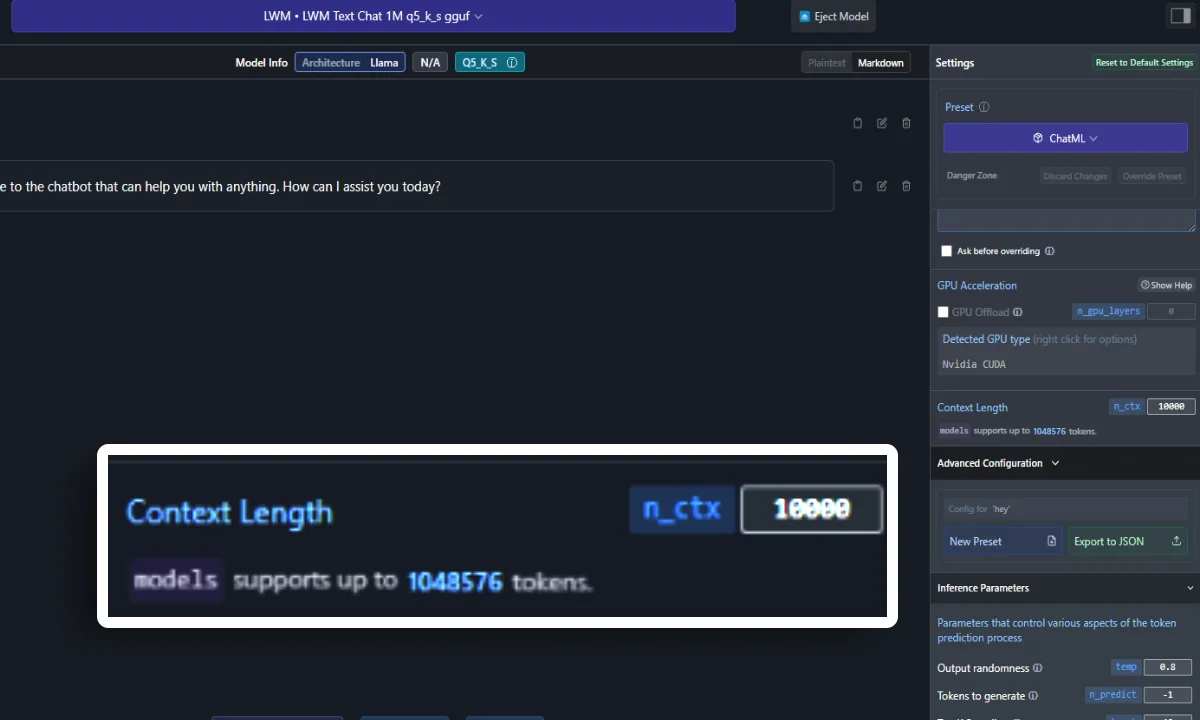

- Context Limit: 256k Native (Extendable to 1M) for the 30B MoE 2507 release; the 14B dense model is 32k native, extendable to 128k with YaRN

- Architecture: Dense & Mixture of Experts (MoE)

- VRAM Requirement: ~12GB (14B) to ~24GB+ (30B MoE)

Why it’s here: Qwen 3 offers the best balance of reasoning and context in the 7B-30B range.

- 14B Dense: Excellent for 24GB cards at Q4 quantization. The comfortable range is 32k-64k; reaching the full 128k on 24GB typically also requires quantizing the KV cache.

- 30B MoE (3.3B Active): The star of the show. It activates only ~3.3B parameters per token but carries the knowledge of a 30B model. This makes it surprisingly fast while maintaining high logic scores across long documents.

- Best For: Repository-level coding tasks and complex logic puzzles where understanding the whole context matters.
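The MoE trade-off comes down to simple arithmetic: every expert must sit in memory, so VRAM follows the total parameter count, while per-token compute follows the active count. A minimal sketch, assuming about 4.5 bits per weight as a stand-in for a typical Q4 quantization with overhead; the parameter counts are the ones quoted above, and both helper functions are hypothetical.

```python
def weight_gib(params_billions, bits_per_weight=4.5):
    """Approximate memory for model weights at a given quantization."""
    return params_billions * 1e9 * bits_per_weight / 8 / 2**30


def relative_decode_cost(active_billions, dense_billions):
    """Per-token compute of an MoE relative to a dense model (rough ratio)."""
    return active_billions / dense_billions


print(f"14B dense weights: {weight_gib(14):.1f} GiB")
print(f"30B MoE weights:   {weight_gib(30):.1f} GiB")
print(f"MoE compute vs 14B dense: {relative_decode_cost(3.3, 14):.2f}x")
```

These figures cover weights only. The KV cache and runtime overhead come on top, which is why the practical VRAM requirements listed above are higher.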

3. Gemma 3 27B (Google)

The Balanced Multimodal Option

- Context Limit: 128k

- Architecture: Dense Transformer (Multimodal)

- VRAM Requirement: ~24GB (Q4 Quant fits tight on a 3090/4090)

Why it’s here: Gemma 3 27B is Google’s open-weight answer to the mid-sized category. It features a native 128k context window and is surprisingly effective at “needle in a haystack” retrieval tasks. Unlike most of the others on this list, it has strong native multimodal support (images + text) within that context window.

- Best For: Analyzing mixed documents (text + images) or general purpose chat with long history.
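A needle-in-a-haystack check is easy to build yourself: bury one fact at a chosen depth in filler text and ask the model to retrieve it. Below is a minimal sketch of the prompt construction only. The filler sentence, the needle, and the `build_haystack_prompt` helper are made up for illustration, and sending the prompt to a model is left out.

```python
def build_haystack_prompt(needle, question, filler, n_sentences, depth):
    """Insert `needle` at relative `depth` (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0 and 1")
    sentences = [filler] * n_sentences
    position = round(depth * n_sentences)
    sentences.insert(position, needle)
    context = " ".join(sentences)
    return f"{context}\n\nQuestion: {question}\nAnswer:"


needle = "The access code for the archive room is 7413."
prompt = build_haystack_prompt(
    needle=needle,
    question="What is the access code for the archive room?",
    filler="The committee reviewed the quarterly figures without comment.",
    n_sentences=2000,
    depth=0.5,
)
print(len(prompt.split()), "words; needle present:", needle in prompt)
```

Sweeping `depth` from 0.0 to 1.0 and `n_sentences` upward shows where in the window, and at what length, a given model starts missing the needle.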

4. Command R7B (12-2024 Release)

The RAG Specialist

- Context Limit: 128k

- Architecture: Transformer (Optimized for Retrieval)

- VRAM Requirement: ~16GB at full precision, less when quantized (Very accessible)

Why it’s here: Cohere’s Command series is trained specifically for RAG and tool use. The R7B variant (released December 2024) punches way above its weight class for citing sources and finding “needles in a haystack.” It doesn’t hallucinate citation sources as often as other small models.

- Best For: Building a local search engine or “Chat with PDF” tool on mid-range hardware (a quantized build fits on an RTX 4070/3080).

5. Mistral Small 3.1 (24B)

The Balanced Middleweight

- Context Limit: 128k

- Architecture: Dense Transformer (Multimodal)

- VRAM Requirement: 24GB (Tight fit with context)

Why it’s here: Mistral Small 3.1 occupies the awkward but necessary middle ground between the lightweight 7B models and the heavy 30B+ models. A 24B dense model is close to the largest you can fit on a 24GB consumer GPU (RTX 3090/4090) at Q4 while still leaving room for context. It handles 32k-64k context reasonably well before VRAM becomes a bottleneck.

- Best For: General purpose assistant that is smarter than Command R7B and needs less VRAM than Qwen 30B.
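The 32k-64k ceiling follows from a simple budget: whatever VRAM remains after the weights and runtime overhead is divided by the per-token KV cache cost. A back-of-the-envelope sketch follows; the 14 GiB weight size, 1.5 GiB overhead, and attention dimensions are assumed round numbers for a 24B-class model at Q4, not measured values.

```python
def max_context_tokens(vram_gib, weights_gib, overhead_gib,
                       layers, kv_heads, head_dim, bytes_per_value=2):
    """Tokens of KV cache that fit in the VRAM left after weights."""
    free_bytes = (vram_gib - weights_gib - overhead_gib) * 2**30
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value
    return max(0, int(free_bytes // per_token))


# Assumed: 24 GiB card, ~14 GiB of Q4 weights, ~1.5 GiB runtime overhead.
common = dict(vram_gib=24, weights_gib=14, overhead_gib=1.5,
              layers=40, kv_heads=8, head_dim=128)
print("FP16 KV cache: ", max_context_tokens(**common))
print("8-bit KV cache:", max_context_tokens(**common, bytes_per_value=1))
```

Under these assumptions an FP16 cache tops out at roughly 55k tokens, and an 8-bit cache roughly doubles that, which is why KV cache quantization is the usual route to the full 128k on a 24GB card.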