14 Best Software Tools for Running Local LLMs

Ever been writing with an AI, feeling like a genius, then… bam! The internet cuts out and your work vanishes? Cloud-based AI is neat, but it can be unreliable. Here’s the good news: local LLM interfaces are here to save the day!

Think of them as tricked-out workshops for your AI projects. You get complete control and privacy, and you no longer need an internet connection. Imagine generating text and getting answers to tough questions, all without anyone spying (or needing Wi-Fi!).

Get ready, because we’re about to take a deep dive into the world of local LLM interfaces. We’ll cover the most popular options, explain their features in plain terms, and show how they can help with your writing, coding, and research. Researcher, developer, or just a word-loving fan? This is your chance to become a local LLM master!


Interfaces for Running Local LLMs

Jan

| Feature | Description |
|---|---|
| Open-source | Jan is an open-source, self-hosted alternative to ChatGPT. |
| Offline Functionality | Runs 100% offline on your computer. |
| Enhanced Productivity | Offers customizable AI assistants, global hotkeys, and in-line AI. |
| Privacy and Security | Conversations, preferences, and model usage are secure, exportable, and deletable on your device. |
| OpenAI Compatibility | Provides an OpenAI-equivalent API server for use with compatible apps. |


Jan is an open-source, self-hosted alternative to ChatGPT, designed to run 100% offline on your computer. It offers enhanced productivity through customizable AI assistants, global hotkeys, and in-line AI features. Because it is offline and local-first, your conversations, preferences, and model usage stay on your device, where they remain secure, exportable, and deletable. It is also OpenAI compatible: it provides an OpenAI-equivalent API server that works as a drop-in replacement for compatible apps.
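To give a feel for what "OpenAI-equivalent API server" means in practice, here is a minimal sketch that talks to a local server using the OpenAI chat completions request shape. The base URL, port, and model name are assumptions for illustration only; check the app's local server settings for the real values.

```python
import json
import urllib.request

# Assumption: address of the local OpenAI-compatible server.
# The real host and port depend on how the app is configured.
BASE_URL = "http://localhost:1337/v1"


def build_chat_request(prompt, model="local-model", base_url=BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        # Hypothetical model id; use one that your server actually lists.
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=60):
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # OpenAI-style responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the local server running, `send_chat_request(build_chat_request("Say hello."))` should return the model's reply. Because the request shape matches OpenAI's, apps that let you change the API base URL can usually be pointed at the local server instead.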

Ava

| Feature | Description |
|---|---|
| Open-Source | Ava is an open-source application, allowing users to freely access and modify its source code. |
| Local Language Models | It runs advanced language models locally on your computer, ensuring privacy and control. |
| Privacy-Centric | Designed with privacy in mind, Ava ensures that all data remains on your device without being shared online. |
| Multiple Language Tasks | Offers a variety of language tasks such as text generation, grammar correction, rephrasing, summarization, and data extraction. |
| Model Freedom | Users have the freedom to use any model, including uncensored ones, without limitations. |
| User-Friendly | Combines power, privacy, and simplicity into one sleek application, making it accessible for all users. |

Ava is a cutting-edge open-source desktop application designed to run advanced language models locally on your computer. It offers a suite of language tasks such as text generation, grammar correction, rephrasing, summarization, and data extraction. With Ava, you have the freedom to use any model, including uncensored ones, without limitations. Privacy is a core feature of Ava, ensuring that all your data remains on your device, never shared online. Ava combines power, privacy, and simplicity into one sleek application, making it an ideal choice for anyone looking to harness the capabilities of language models without compromising on privacy.

Faraday.dev

| Rating | Key Features | Suitable for |
|---|---|---|
| 5/5 | Offline operation | Users without coding knowledge |
| | Local storage of AI models | Privacy-conscious users |
| | Character Creator for personalized AI | AI enthusiasts |
| | Cross-platform support (Mac & Windows) | Offline use |

Faraday.dev is an LLM (large language model) interface that offers a seamless, hassle-free user experience. With a simple one-click desktop installer, it lets users start chatting immediately, with no coding knowledge required. What sets Faraday apart is that it operates entirely offline, making it a good fit for situations without internet access, such as travel.

A key advantage of Faraday is its local storage of AI models, ensuring utmost privacy and security. Users can rest assured that their characters’ behavior remains unchanged, and access to data cannot be revoked or manipulated by any external entity. Moreover, all chat data is saved locally, never sent to remote servers, enhancing data privacy.

One of the standout features of Faraday is the “Character Creator,” empowering users to craft personalized AI characters for various roles like assistants, teachers, therapists, and more. Furthermore, the platform provides access to a rich hub of free character downloads, fostering a dynamic community.

Available for both Mac and Windows, Faraday.dev promises a robust and private environment for users to explore the potential of LLM while enjoying the convenience of offline access.

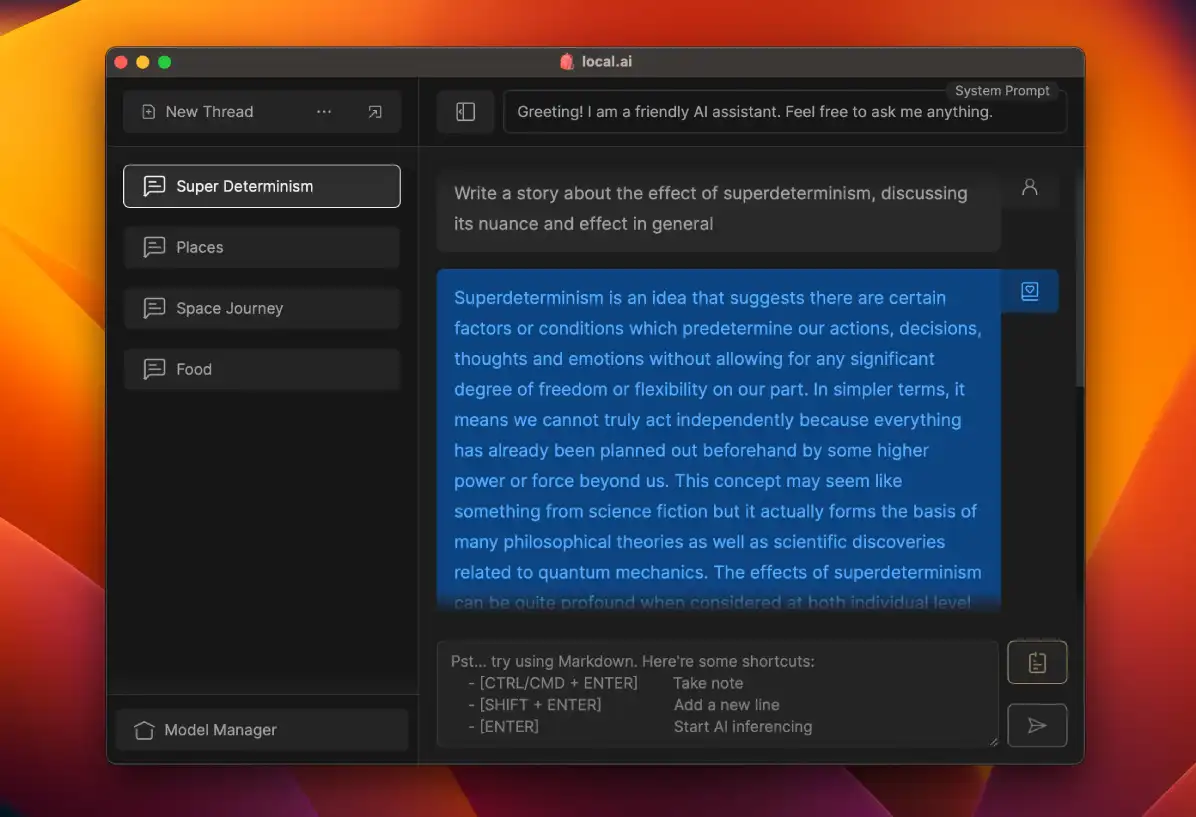

local.ai

| Rating | Key Features | Suitable for |
|---|---|---|
| 4/5 | Open-source nature | Users wanting customization |
| | Efficient memory utilization | Cross-platform users |
| | Compatibility with various LLM formats | AI researchers |

local.ai is a user-friendly application designed specifically for running local open-source large language models (LLMs). Its intuitive interface and streamlined user experience simplify the process of experimenting with AI models locally.

One of the standout features of local.ai is its open-source nature, allowing users to freely access and modify the software to suit their specific needs. Powered by a robust Rust backend, local.ai ensures efficient memory utilization and a compact footprint, optimizing performance for a seamless user experience.

local.ai provides broad compatibility, supporting Linux, Windows, and Mac. It also supports various LLM formats such as llama.cpp, MPT, and others, so you can work with different model types.

Whether you’re a seasoned AI researcher or a developer looking to harness the power of local LLMs, local.ai provides the perfect solution with its exceptional interface, cross-platform support, and compatibility with various LLM formats.

Msty

Msty is a fairly easy-to-use application for running LLMs locally. The UI feels modern, and setup is straightforward: download the installer, run it, and you can start using the software. As far as I know, it uses Ollama for local LLM inference. Like other tools that load models downloaded from Hugging Face, it works with the GGUF format, so any GGUF model you download should, in principle, be usable here.
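Since several of these tools expect GGUF files, it can help to confirm that a download really is one before importing it. A minimal sketch: a GGUF file starts with the four ASCII bytes `GGUF`, followed by a little-endian 32-bit version number. The function name `read_gguf_version` is made up for this example and is not part of any of the tools covered here.

```python
import struct

GGUF_MAGIC = b"GGUF"  # first four bytes of every GGUF file


def read_gguf_version(path):
    """Return the GGUF version number, or None if the file is not GGUF."""
    with open(path, "rb") as f:
        header = f.read(8)
    # A valid header has the magic bytes plus a 4-byte version field.
    if len(header) < 8 or header[:4] != GGUF_MAGIC:
        return None
    (version,) = struct.unpack("<I", header[4:8])
    return version
```

If the function returns `None`, the file is probably an older GGML file, a partial download, or something else entirely. The check only reads the header, so it says nothing about whether the rest of the file is intact.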

Some of the quality of life features include Split Chats, Edit Conversation, excellent chat organization, and the ability to change model settings.

Sanctum

Sanctum is another application that lets you download and use models in GGUF format. An interesting feature is its support for PDFs and other document files: you can ask questions about them, and everything is processed locally. The UI is good, and while it doesn’t have many additional features, it works well for everyday chat tasks.

Oobabooga Web UI

| Rating | Key Features | Suitable for |
|---|---|---|
| 4.5/5 | Versatile interface with multiple modes | Users who need flexibility |
| | Support for various model backends | Handling diverse data |
| | CPU mode for transformers models | Optimized performance |
| | API with websocket streaming endpoints | Real-time applications |

The Oobabooga Web UI is a highly versatile interface for running local large language models (LLMs). It offers a wide range of features and is compatible with Linux, Windows, and Mac. With three interface modes (default, notebook, and chat) and support for multiple model backends (including transformers, llama.cpp, AutoGPTQ, GPTQ-for-LLaMa, RWKV, and FlexGen), it provides flexibility and convenience.

Key Features:

- Dropdown menu for easy switching between different models.

- LoRA support: load and unload LoRAs on the fly, load multiple LoRAs at the same time, and train new LoRAs.

- Precise instruction templates for chat mode, facilitating conversations with various LLMs.

- Multimodal pipelines, such as LLaVA and MiniGPT-4, enabling the integration of diverse data formats and modalities.

- Efficient text streaming and markdown output with LaTeX rendering.

- CPU mode for transformers models and DeepSpeed ZeRO-3 inference for optimized performance.

- Extensions for customization, including custom chat characters.

- API with websocket streaming endpoints for real-time interactive applications.

LLM as a Chatbot Service

| Rating | Key Features | Suitable for |
|---|---|---|
| 4/5 | Model-agnostic conversation library | Users needing chatbots |
| | User-friendly design | Model compatibility |
| | Context management techniques | Fast generation |

LLM as a Chatbot Service offers a convenient way to access multiple open-source, fine-tuned large language models (LLMs) as a chatbot service. It provides a model-agnostic conversation and context management library called Ping Pong. The user interface, GradioChat, resembles HuggingChat and is built with Gradio. The interface supports various model types and manages context efficiently: it keeps prompts short for faster generation and retains only a limited number of past conversations. Enhancements such as summarization and information extraction are planned for future updates. With its user-friendly design and broad model compatibility, it is a powerful tool for working with local LLMs.
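The idea of keeping prompts short by retaining only a limited number of past exchanges can be sketched in a few lines. This is an illustration of the general technique, not the Ping Pong library's actual API; `build_prompt`, its parameters, and the `User:`/`Assistant:` template are hypothetical.

```python
def build_prompt(history, user_message, max_turns=3):
    """Build a prompt from only the most recent turns.

    history: list of (user_text, assistant_text) pairs, oldest first.
    max_turns: how many past exchanges to keep in the prompt.
    """
    # Guard against max_turns == 0, because history[-0:] is the whole list.
    recent = history[-max_turns:] if max_turns > 0 else []
    lines = []
    for user_text, assistant_text in recent:
        lines.append(f"User: {user_text}")
        lines.append(f"Assistant: {assistant_text}")
    # End with the new message and an open slot for the model's reply.
    lines.append(f"User: {user_message}")
    lines.append("Assistant:")
    return "\n".join(lines)
```

Dropping older turns trades memory for speed: the model forgets early parts of the conversation, but every request stays small. Summarizing the dropped turns, which the project lists as a planned enhancement, is one way to soften that trade-off.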

GPT4All

| Rating | Key Features | Suitable for |
|---|---|---|
| 4.5/5 | No need for GPU or internet connection | Accessibility |
| | Versatile assistant for various tasks | Writing, coding, analysis |
| | Supports all major model types | Text generation, translation, sentiment analysis |

GPT4All is a user-friendly, privacy-aware large language model (LLM) interface designed for local use. It is free to use and runs without a GPU or an internet connection, making it highly accessible.

With GPT4All, you have a versatile assistant at your disposal. It can assist you in various tasks, including writing emails, creating stories, composing blogs, and even helping with coding. Additionally, GPT4All has the ability to analyze your documents and provide relevant answers to your queries.

Installation of GPT4All is a breeze, as it is compatible with Windows, Linux, and Mac operating systems. Regardless of your preferred platform, you can seamlessly integrate this interface into your workflow.

GPT4All supports all major model types, ensuring a wide range of pre-trained models to choose from. This allows you to utilize the power of large language models for tasks such as text generation, language translation, and sentiment analysis.

In summary, GPT4All is an efficient LLM Interface that brings the power of large language models to your local machine. With its simplicity, versatile capabilities, and support for multiple model types, it is an invaluable tool for various writing and coding tasks.

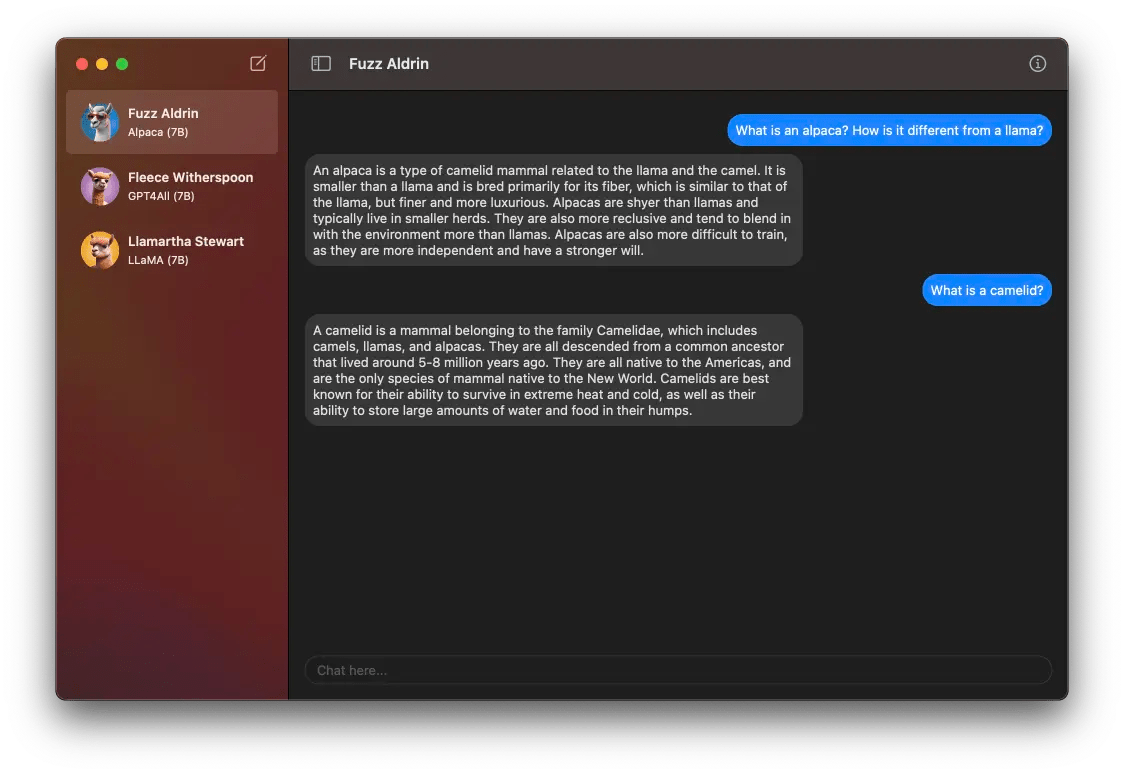

LlamaChat

| Rating | Key Features | Suitable for |
|---|---|---|
| 4.5/5 | Exclusively for Mac users | Mac users |
| | Integration with open-source libraries | Secure conversations |
| | Chat with multiple models on Mac | AI-driven conversations |

LlamaChat is a powerful local LLM interface designed exclusively for Mac users. With LlamaChat, you can chat with LLaMA, Alpaca, and GPT4All models running directly on your Mac. Importing model checkpoints and .ggml files is a breeze, thanks to its integration with open-source libraries like llama.cpp and llama.swift. As a free, open-source solution, LlamaChat prioritizes your privacy and ensures that your conversations remain secure. Join the Mac community and experience AI-driven conversations with LlamaChat today.

Note: LlamaChat is exclusively available for Mac users.

LM Studio

| Rating | Key Features | Suitable for |
|---|---|---|
| 4/5 | Easy LLM operation on your laptop | Beginners and experts |
| | Discover and download compatible GGML models | Language processing tasks |
| | Simple and efficient experience | Exploring different models |

LM Studio is a user-friendly interface that lets you run large language models (LLMs) on your laptop, offline. With no complex setup required, it is easy for both beginners and experienced users. You can discover new LLMs on the app’s homepage and download compatible GGML model files from Hugging Face repositories. LM Studio offers a simple, efficient experience similar to the ChatGPT UI. It’s a convenient tool for exploring different models and handling language processing tasks on your local machine.

LocalAI

LocalAI is a versatile and efficient drop-in replacement REST API designed specifically for local inferencing with large language models (LLMs). It offers seamless compatibility with OpenAI API specifications, allowing you to run LLMs locally or on-premises using consumer-grade hardware. What sets LocalAI apart is its support for multiple model families, all of which are compatible with the ggml format. The best part? You don’t need a GPU to utilize its capabilities.

One of LocalAI’s standout features is its ability to operate without an internet connection. Once you load the models for the first time, they remain cached in memory, ensuring faster inference times for subsequent requests. By leveraging C++ bindings instead of shelling out, LocalAI delivers remarkably fast inference and exceptional performance.
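The "load once, keep in memory" behaviour described above can be pictured with a small cache. This is only a sketch of the general pattern, not LocalAI's implementation; `get_model` and `answer` are hypothetical names, and the "model" is a stand-in dictionary rather than real weights.

```python
from functools import lru_cache


@lru_cache(maxsize=2)  # keep at most two models in memory at once
def get_model(name):
    """Load a model on first use and reuse it for later requests."""
    # Placeholder for the slow step (reading weights from disk).
    return {"name": name, "weights": f"<weights for {name}>"}


def answer(model_name, prompt):
    """Handle one request, loading the model only if it is not cached."""
    model = get_model(model_name)
    return f"[{model['name']}] reply to: {prompt}"
```

Only the first request for a given model pays the loading cost; later requests hit the cache. `get_model.cache_info()` reports the hits and misses if you want to see that for yourself.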

In summary, LocalAI provides a streamlined and efficient solution for running LLMs locally, enabling you to leverage their power and versatility without the need for a GPU. With its support for various model families, offline functionality, and optimized performance, LocalAI is a valuable tool for anyone seeking local inferencing capabilities.

LoLLMS Web UI

Introducing LoLLMS WebUI (Lord of Large Language Models: One tool to rule them all), a user-friendly interface for accessing and using large language models (LLMs). With LoLLMS WebUI, you can enhance your writing, coding, data organization, image generation, and more.

Choose your preferred binding, model, and personality to customize your tasks. Whether it’s improving your emails, essays, or debugging code, LoLLMS WebUI has got you covered.

Explore a wide range of functionalities, including searching, data organization, and image generation, all within an easy-to-use UI. The interface offers both light and dark mode options for your convenience.

Integration with GitHub repository allows for seamless access to your projects, making collaboration a breeze. Select from predefined personalities that come with welcome messages, adding a touch of uniqueness to your interactions.

Provide feedback on generated answers with a thumbs up/down rating system. You can also copy, edit, and remove messages to keep full control over your discussions.

LoLLMS WebUI ensures your discussions are stored in a local database for easy retrieval. You can search, export, and delete multiple discussions effortlessly.
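To show what storing discussions in a local database with search, export, and delete might look like, here is a minimal sketch using Python's built-in `sqlite3` module. The table layout and function names are assumptions made up for this example, not LoLLMS WebUI's actual schema or code.

```python
import json
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) a local discussion database."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS discussions ("
        "id INTEGER PRIMARY KEY, title TEXT NOT NULL, body TEXT NOT NULL)"
    )
    return conn


def add_discussion(conn, title, body):
    """Insert one discussion and return its id."""
    cur = conn.execute(
        "INSERT INTO discussions (title, body) VALUES (?, ?)", (title, body)
    )
    conn.commit()
    return cur.lastrowid


def search(conn, term):
    """Return (id, title) for discussions whose title or body contains term."""
    pattern = f"%{term}%"
    rows = conn.execute(
        "SELECT id, title FROM discussions "
        "WHERE title LIKE ? OR body LIKE ? ORDER BY id",
        (pattern, pattern),
    )
    return rows.fetchall()


def export_json(conn, ids):
    """Export the selected discussions as a JSON string."""
    marks = ",".join("?" for _ in ids)
    rows = conn.execute(
        f"SELECT id, title, body FROM discussions WHERE id IN ({marks}) ORDER BY id",
        list(ids),
    ).fetchall()
    return json.dumps([{"id": r[0], "title": r[1], "body": r[2]} for r in rows])


def delete_many(conn, ids):
    """Delete several discussions in one statement."""
    marks = ",".join("?" for _ in ids)
    conn.execute(f"DELETE FROM discussions WHERE id IN ({marks})", list(ids))
    conn.commit()
```

Passing a file path instead of `":memory:"` keeps the data on disk between sessions, so nothing has to leave your machine.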

Whether you prefer Docker, conda, or manual virtual environment setups, LoLLMS WebUI supports them all, ensuring compatibility with your preferred development environment.

Experience the power of large language models with LoLLMS WebUI. Get ready to enhance your productivity with this comprehensive and intuitive interface.

AnythingLLM

Tired of chatbots that lock you in? AnythingLLM lets you choose the LLM that fits you best, from big names like GPT-4 to your own custom model.

No more file format hassles! PDFs, Word docs, it can handle them all. And that’s not all. You can personalize its look and unleash your inner developer with a full API.

Need complete control over your local LLMs? Privacy a top concern? AnythingLLM is your ultimate business intelligence partner for your AI journey. It’s more than just a chatbot, it’s a revolution in running local LLMs.

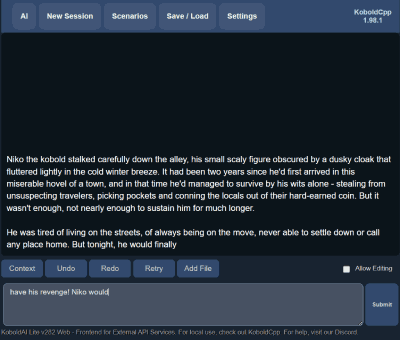

KoboldCpp

KoboldCpp is a remarkable interface developed by Concedo, designed to facilitate the utilization of llama.cpp function bindings through a simulated Kobold API endpoint. This innovative interface brings together the versatility of llama.cpp and the convenience of a user-friendly graphical user interface (GUI).
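As a rough illustration of what a Kobold-style API endpoint looks like from a client's point of view, here is a minimal sketch that builds a text generation request and reads the reply. The address and port are assumptions about a default local setup; adjust them to match how you started the server.

```python
import json
import urllib.request

# Assumption: default local address of the Kobold-style generate endpoint.
KOBOLD_URL = "http://localhost:5001/api/v1/generate"


def build_generate_request(prompt, max_length=80, url=KOBOLD_URL):
    """Build (but do not send) a Kobold-style text generation request."""
    payload = {"prompt": prompt, "max_length": max_length}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_text(response_body):
    """Pull the generated text out of a Kobold-style JSON response."""
    return json.loads(response_body)["results"][0]["text"]
```

With the server running, you would send the request with `urllib.request.urlopen(build_generate_request("Once upon a time"))` and pass the response body to `extract_text`.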

With KoboldCpp, you gain access to a wealth of features and tools for running local LLM (large language model) applications, including persistent stories, editing tools, flexible save formats, and memory management. The interface is an all-inclusive package that integrates with Kobold and Kobold Lite.

What sets KoboldCpp apart is its compact size, weighing in at a mere 20 MB (excluding model weights). Despite its small footprint, this interface packs a powerful punch, providing a comprehensive solution for running local LLM applications. Whether you’re an aspiring author, a game developer, or a language enthusiast, KoboldCpp empowers you to explore the potential of LLM technology effortlessly.

Experience the simplicity and power of KoboldCpp as it opens up a world of possibilities for unleashing the full potential of llama.cpp in your projects.