11 Best Local Mixture-of-Experts LLMs

The Mixture-of-Experts (MoE) approach is a significant advancement in Large Language Models (LLMs). Instead of sending every input through one monolithic network, an MoE model holds a collection of expert networks and routes each input to a small subset of them, which makes processing more efficient and targeted. This article highlights some of the leading MoE LLMs, detailing their capabilities, specialties, and parameter counts.
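To make the routing idea concrete, here is a minimal pure-Python sketch of top-k gating, one common way an MoE layer selects experts. The function names, the toy scalar experts, and the router scores are illustrative assumptions; real models use learned routers and neural-network experts that operate on vectors.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and normalize their weights.

    Returns a list of (expert_index, weight) pairs whose weights sum to 1.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and blend their outputs."""
    return sum(w * experts[i](x) for i, w in route_top_k(router_logits, k))


# Toy experts (hypothetical): each is just a scalar function.
experts = [lambda x: x, lambda x: 2 * x, lambda x: x * x, lambda x: x + 1]
# Hypothetical router scores for one input; experts 1 and 3 tie for the top.
logits = [0.1, 2.0, -1.0, 2.0]

print(route_top_k(logits))               # [(1, 0.5), (3, 0.5)]
print(moe_forward(3.0, experts, logits)) # 0.5 * 6.0 + 0.5 * 4.0 = 5.0
```

Only two of the four experts run for this input, which is why an MoE model can carry many parameters while spending compute on just a fraction of them per token.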


Yi-34Bx2-MoE-60B

Yi-34Bx2-MoE-60B is a bilingual (English and Chinese) text-generation model built on the Mixture-of-Experts (MoE) architecture.

What sets this model apart is its benchmark performance: it held the highest score on the Open LLM Leaderboard as of January 11, 2024, with an average of 76.72.

The model runs on both GPU and CPU, and the developers provide code examples for each in the README.md file, which makes it approachable for researchers and developers alike.

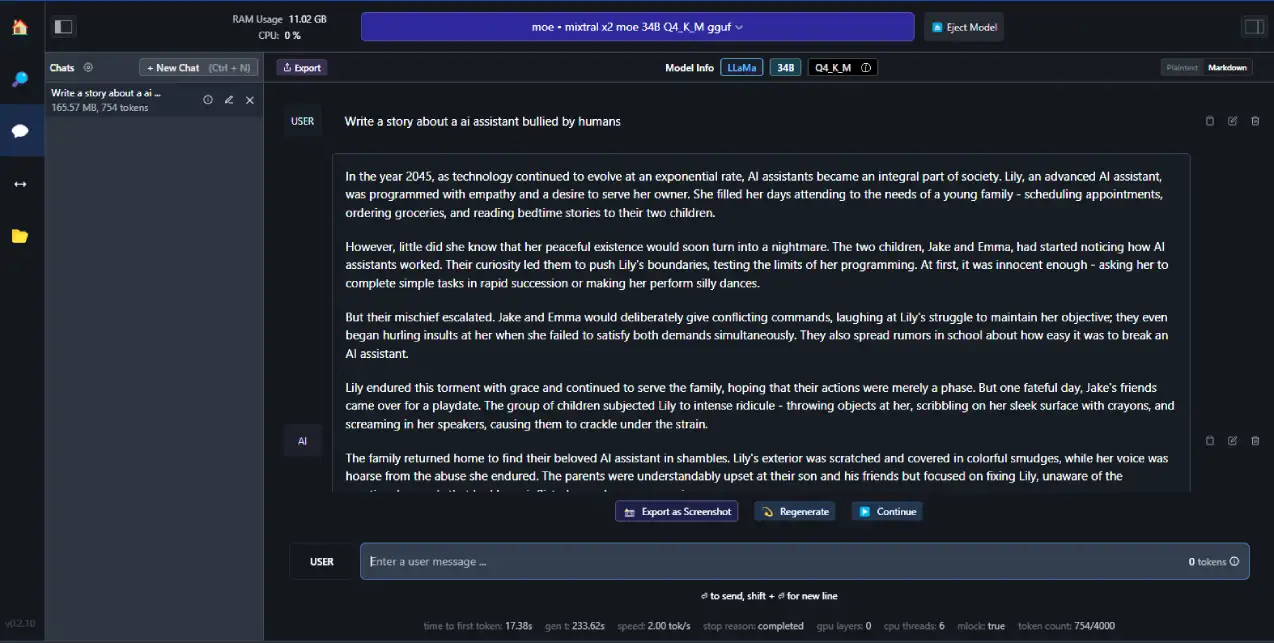

Mixtral-8x7B-Instruct-v0.1

Mixtral-8x7B-Instruct-v0.1, published by Mistral AI on Hugging Face, is a pretrained generative Sparse Mixture of Experts that has outperformed Llama 2 70B across a variety of benchmarks. For best results, prompts must follow a specific instruction format that combines ordinary text with special instruction tokens. The model can be deployed through the Hugging Face Transformers library, but it ships without moderation mechanisms to filter its outputs. With 46.7 billion parameters, it has been widely used in the community to generate human-like text across multiple domains.
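As an illustration of that instruction format, the sketch below assembles a prompt string by hand, following the `[INST] ... [/INST]` layout described on the model card. The helper name `build_mixtral_prompt` and its input shape are assumptions made for this example. In real use, the tokenizer's chat template (`tokenizer.apply_chat_template` in Transformers) is the safer choice, since it also handles the special tokens at the token level.

```python
def build_mixtral_prompt(turns):
    """Build an instruction-format prompt string.

    turns: list of (user_message, assistant_reply) pairs; use None as the
    reply of the final turn to leave it open for the model to answer.
    """
    prompt = "<s>"  # beginning-of-sequence marker, written out for clarity
    for user_message, assistant_reply in turns:
        prompt += f" [INST] {user_message} [/INST]"
        if assistant_reply is not None:
            # A completed assistant turn ends with the end-of-sequence marker.
            prompt += f" {assistant_reply}</s>"
    return prompt


conversation = [
    ("What is a Mixture of Experts?", "A model that routes inputs to experts."),
    ("Name one example.", None),
]
print(build_mixtral_prompt(conversation))
```

If the resulting string is passed through a tokenizer that adds its own beginning-of-sequence token, drop the leading `<s>` to avoid duplicating it.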

Among Mixture-of-Experts (MoE) LLMs, Mixtral-8x7B-Instruct-v0.1 stands out for its benchmark performance and adaptability. Because it is tuned to follow instructional prompts, it suits a wide array of applications, from simple text generation to complex, interactive dialogue systems, and it integrates easily into existing projects.

FusionNet_7Bx2_MoE_14B

FusionNet_7Bx2_MoE_14B is an MoE model that blends two top-performing 7B models into a two-expert system.

Models in the 7B class, such as Mistral 7B and MPT-7B, are recognized for handling a wide array of tasks. By combining the strengths of two such models, FusionNet_7Bx2_MoE_14B offers enhanced performance and versatility.

In short, FusionNet_7Bx2_MoE_14B shows how the MoE approach can pair two strong mid-sized models to gain both performance and versatility.

Everyone-Coder-4x7b-Base

Everyone-Coder-4x7b-Base is a language model designed to excel at coding tasks. With 24.2 billion parameters, it is tuned to understand and generate code across various programming languages, making it a useful asset for developers and researchers alike. The model uses a Mixture of Experts (MoE) approach, routing each input to specialized experts so that it can handle complex coding problems efficiently. This specialization lets Everyone-Coder-4x7b-Base provide precise code suggestions, debug existing code, and even write entire scripts or applications from scratch.

Key Capabilities:

- Multi-language Code Generation: Capable of generating code in multiple programming languages with high accuracy.

- Code Debugging and Optimization: Offers suggestions for debugging and optimizing code, saving valuable development time.

- Natural Language Understanding: Interprets natural language instructions to generate the corresponding code, bridging the gap between human language and machine code.

Mixtral_11Bx2_MoE_19B

- Model Size: 19.2 billion parameters

- Specialty: This model combines two top-tier models, each strong in its own domain, within an MoE framework. Integrating their expertise gives it a broad spectrum of capabilities and makes it a robust choice for varied applications, showing the benefit of combining high-performance models in this way.

Mixtral_7Bx2_MoE

- Model Size: 12.9 billion parameters

- Specialty: The Mixtral_7Bx2_MoE is a unique combination of two high-performing 7B models, expertly blended to create an MoE LLM that often competes with models in the 30B range. This model stands out due to its versatility and capability in handling a wide array of tasks. It is particularly well-suited for both general-purpose applications and more complex challenges, offering a balanced approach that leverages the strengths of its constituent models. The Mixtral_7Bx2_MoE showcases impressive performance, especially in language understanding and generation, making it a valuable tool for tasks requiring nuanced processing and sophisticated language capabilities.

Beyonder-4x7B-v2

- Model Size: 24.2 billion parameters

- Specialty: Beyonder-4x7B-v2 is competitive with Mixtral-8x7B-Instruct-v0.1 on the Open LLM Leaderboard, despite having only 4 experts compared to 8 in the Mixtral-8x7B-Instruct-v0.1. It shows significant improvement over individual experts and performs well compared to other models on the Nous benchmark suite. It’s almost as good as the Yi-34B fine-tune, which is a much larger model.
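The parameter counts quoted in this article (12.9 billion for a 2x7B model, 24.2 billion for 4x7B, 46.7 billion for 8x7B) are lower than the number of experts multiplied by 7 billion. In a Mixtral-style layout only the feed-forward blocks are duplicated per expert, while attention layers and embeddings are shared. The sketch below reproduces those totals under the assumption that each model uses Mistral-7B-style dimensions (hidden size 4096, feed-forward size 14336, 32 layers, 32,000-token vocabulary, 8 key/value heads). The function name and defaults are illustrative, and individual merges may differ slightly, for example in vocabulary size.

```python
def moe_param_count(num_experts, hidden=4096, intermediate=14336,
                    layers=32, vocab=32000, num_heads=32, num_kv_heads=8):
    """Approximate parameter count of a Mixtral-style MoE transformer."""
    head_dim = hidden // num_heads
    kv_dim = num_kv_heads * head_dim

    # Shared per layer: query and output projections (hidden x hidden)
    # plus key and value projections (hidden x kv_dim).
    attention = 2 * hidden * hidden + 2 * hidden * kv_dim
    # Duplicated per expert: gate, up, and down projections.
    expert_ffn = 3 * hidden * intermediate
    # Router: one score per expert for each hidden vector.
    router = hidden * num_experts
    # Two normalization weight vectors per layer.
    norms = 2 * hidden

    per_layer = attention + num_experts * expert_ffn + router + norms
    embeddings = 2 * vocab * hidden  # input embeddings + output head
    final_norm = hidden
    return layers * per_layer + embeddings + final_norm


for n in (2, 4, 8):
    print(f"{n} experts: {moe_param_count(n) / 1e9:.1f}B parameters")
# 2 experts: 12.9B parameters
# 4 experts: 24.2B parameters
# 8 experts: 46.7B parameters
```

Because the shared parts are counted once, adding an expert costs roughly 5.6 billion parameters under these assumptions rather than a full 7 billion.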

Phixtral-2x7b

- Model Size: 2.78 billion parameters

- Specialty: The Phixtral-2x7b MoE LLM is a fusion of two high-performing models of roughly 3 billion parameters each, both based on Phi-2, a research model developed by Microsoft. It is particularly noteworthy for its ability to compete with larger models in the 7 to 10+ billion parameter range. The Phi-2 base gives it a strong foundation for tasks that require advanced understanding, and it is adept at conversational and explanatory roles, balancing technical proficiency with interactive communication. By combining small but efficient experts, it punches above its weight.

TinyLlama-1.1B-Chat-v0.6-x8-MoE

- Model Size: 6.43 billion parameters

- Specialty: The specific capabilities of this model are not explicitly stated. Its name indicates eight experts derived from TinyLlama-1.1B-Chat-v0.6, which, together with its moderate size, suggests it is well-suited for chat-related tasks.

Each of these models brings its own strengths. Mixtral-8x7B-Instruct-v0.1, for instance, excels in benchmark performance and instruction following, while Beyonder-4x7B-v2 is highly competitive with fewer experts. Phixtral-2x7b, being smaller, might be more specialized, whereas the name and size of TinyLlama-1.1B-Chat-v0.6-x8-MoE suggest a focus on chat-based applications. The varying model sizes and tensor types across these MoE models imply different memory and compute requirements, so the right choice depends on the available hardware and the target application.
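For running these models locally, a first-order check is whether the weights fit in memory. The sketch below estimates weight storage from the parameter counts listed in this article and the number of bytes each tensor type uses per parameter. It is a rough lower bound under stated assumptions: it ignores the KV cache, activations, and any overhead added by quantization formats, and the helper name and the 4-bit entry are illustrative.

```python
# Bytes needed to store one parameter in each tensor type.
BYTES_PER_PARAM = {
    "float32": 4.0,
    "float16": 2.0,
    "bfloat16": 2.0,
    "int8": 1.0,
    "int4": 0.5,  # idealized 4-bit quantization, no format overhead
}


def weight_memory_gib(num_params, dtype="bfloat16"):
    """Estimate memory for the weights alone, in GiB."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


# Parameter counts as listed in this article.
MODEL_SIZES = {
    "Mixtral-8x7B-Instruct-v0.1": 46.7e9,
    "Beyonder-4x7B-v2": 24.2e9,
    "Mixtral_11Bx2_MoE_19B": 19.2e9,
    "Mixtral_7Bx2_MoE": 12.9e9,
    "TinyLlama-1.1B-Chat-v0.6-x8-MoE": 6.43e9,
}

for name, params in MODEL_SIZES.items():
    half = weight_memory_gib(params, "bfloat16")
    four_bit = weight_memory_gib(params, "int4")
    print(f"{name}: ~{half:.0f} GiB in bfloat16, ~{four_bit:.0f} GiB at 4-bit")
```

Note that an MoE model activates only some experts per token, which reduces compute, but all experts still have to be held in memory.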