17 Best Local Vision LLMs (Open Source)

In the digital age, where more information than ever flows through visual channels, understanding and interacting with visual data has become critical. This is where local vision large language models (LLMs) come in, changing how we process and generate visual information.

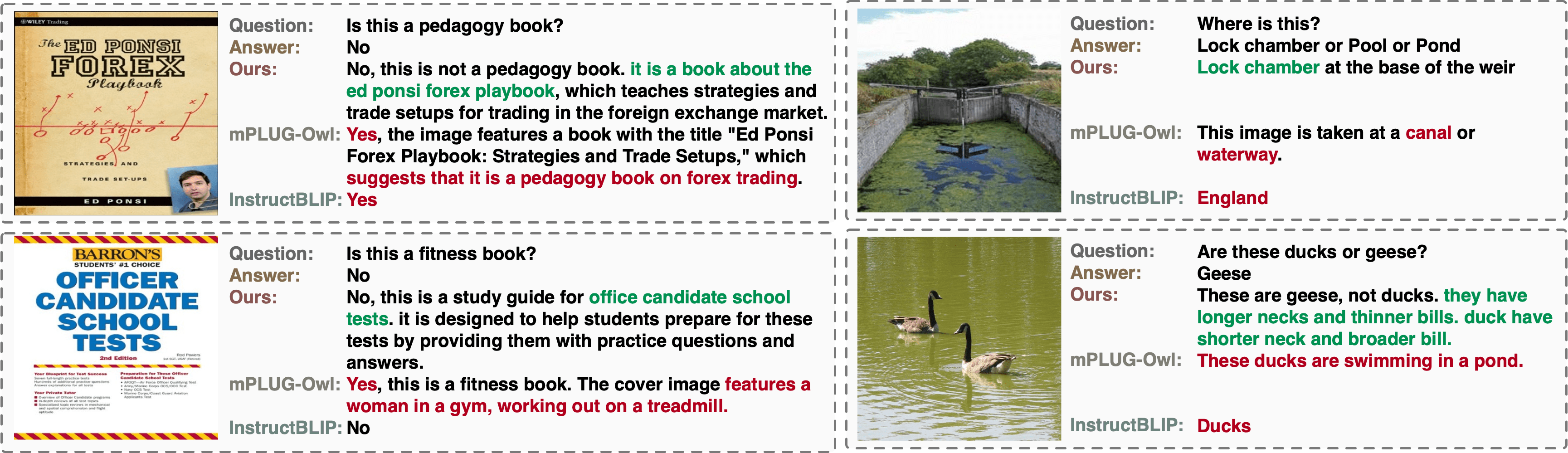

This article delves into the exciting world of Best Local Vision LLMs (Open Source), highlighting their capabilities and potential to unlock groundbreaking applications. We’ll explore how these AI-powered models excel at tasks like image captioning, visual question answering, and even creative text-image composition.

But what makes Open Source LLMs so special? Openness breeds innovation, allowing researchers and developers to tinker, analyze, and build upon these models, accelerating advancements in the field.

Deepseek-vl-7b-chat

DeepSeek-VL-7B-Chat is a vision-language model that can understand images and text. It has 7 billion parameters and can process images up to 1024×1024 resolution, which is one of the highest among multimodal models. It is based on DeepSeek-LLM-7B-Chat, a large language model that can handle both English and Chinese. DeepSeek-VL-7B-Chat is fine-tuned on extra instruction data, which makes it more suitable for chat scenarios.

Some of the features of DeepSeek-VL-7B-Chat are:

- It can generate captions, questions, and answers for images, as well as compose text and images creatively.

- It can handle various types of images, such as natural scenes, web pages, diagrams, formulas, and scientific literature.

- It can interact with users through natural language instructions, and customize the output according to the user’s preferences.

- It can be easily integrated with other applications, such as chatbots, search engines, and social media platforms.

If you want to try DeepSeek-VL-7B-Chat, you can visit its Hugging Face page, where you can find the model card, the download link, and some examples of how to use it.
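Before downloading a model of this size to run locally, it helps to estimate how much memory the weights alone will occupy. The snippet below is a rough back-of-the-envelope sketch, not a measurement: the helper `weight_memory_gb` is a hypothetical name, the bytes-per-parameter figures are the usual sizes for each numeric format, and the estimate ignores activations, the vision encoder's working memory, and the KV cache.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory needed just to hold the weights, in GB (10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


PARAMS_7B = 7e9  # the 7 billion parameters quoted for DeepSeek-VL-7B-Chat

# Common storage formats and their size per parameter (illustrative list).
for label, nbytes in [("fp32", 4), ("fp16/bf16", 2), ("int8", 1), ("4-bit", 0.5)]:
    print(f"{label:>9}: ~{weight_memory_gb(PARAMS_7B, nbytes):.1f} GB")
```

The same arithmetic applies to any model in this list: swap in the parameter count and the precision you plan to load it in.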

internlm-xcomposer2-7b

InternLM-XComposer2-7B is a vision-language large model (VLLM) based on InternLM2 for advanced text-image comprehension and composition.

It is released in two versions:

- InternLM-XComposer2-VL: The pretrained VLLM model with InternLM2 as the initialization of the LLM, achieving strong performance on various multimodal benchmarks.

- InternLM-XComposer2: The finetuned VLLM for Free-form Interleaved Text-Image Composition.

This model goes beyond conventional vision-language understanding, adeptly crafting interleaved text-image content from diverse inputs like outlines, detailed textual specifications, and reference images, enabling highly customizable content creation.

MoE-LLaVA

MoE-LLaVA is a framework for building large vision-language models that uses a “mixture-of-experts” approach to achieve strong performance with fewer active parameters. The approach divides parts of the model into smaller, specialized experts, each adept at handling particular kinds of input. This not only reduces the number of parameters that are active at once, but also improves the model’s efficiency and scalability.
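The mixture-of-experts idea can be illustrated with a toy routing layer. This is a minimal sketch of generic top-k expert routing, not MoE-LLaVA's actual code: the names `moe_layer` and `make_expert` are hypothetical, and the "experts" are simple scaling functions standing in for the feed-forward networks a real model would use.

```python
import math
import random


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical 'expert': an element-wise scaling of the input vector."""
    return lambda x: [scale * v for v in x]


def moe_layer(x, router_weights, experts, top_k=2):
    """Route one token vector x to its top_k experts and mix their outputs."""
    # Router: one logit per expert (dot product of a router row with the token).
    logits = [sum(w * v for w, v in zip(row, x)) for row in router_weights]
    probs = softmax(logits)
    # Only the top_k experts run; the others are skipped entirely.
    chosen = sorted(range(len(experts)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    out = [0.0] * len(x)
    for i in chosen:
        gate = probs[i] / norm  # renormalised routing weight
        out = [o + gate * v for o, v in zip(out, experts[i](x))]
    return out, chosen


random.seed(0)
DIM, NUM_EXPERTS = 4, 4
router_weights = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
experts = [make_expert(s) for s in (0.5, 1.0, 1.5, 2.0)]
token = [1.0, -2.0, 0.5, 3.0]

output, chosen = moe_layer(token, router_weights, experts, top_k=2)
print("experts used:", chosen)
print("output:", output)
```

Because only `top_k` of the experts run for each token, the compute per token stays close to that of a much smaller dense model even though the total parameter count is larger.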

Here are some key highlights of MoE-LLaVA:

- High performance with fewer parameters: MoE-LLaVA can achieve performance comparable to other large LLMs with significantly fewer parameters, making it more efficient and cost-effective to train and run.

- Effectiveness on various tasks: MoE-LLaVA has shown impressive results on a variety of visual understanding tasks, including image captioning, visual question answering, and object detection.

- Reduced hallucinations: MoE-LLaVA’s architecture helps mitigate the issue of hallucinations, where the model generates unrealistic or inaccurate outputs.

- Relatively simple training: Compared to other large LLMs, MoE-LLaVA requires less training time and resources, making it more accessible to a wider range of researchers and developers.

moondream2

moondream2 is a small vision language model designed to run efficiently on edge devices. It has 1.6 billion parameters and is built using SigLIP, Phi-1.5 and the LLaVA training dataset. It can perform various vision and language tasks, such as image captioning, visual question answering, and image retrieval. It achieves impressive results on several benchmarks, such as 74.2% on VQAv2, 58.5% on GQA, and 36.4% on TextVQA.

Moondream1

Moondream1 is a compact yet powerful visual Large Language Model (LLM). Despite being a 1.6 billion parameter model, it is capable of performing tasks usually reserved for larger models.

The model was built by @vikhyatk using a combination of SigLIP, Phi-1.5, and the LLaVa training dataset. SigLIP is a simple pairwise Sigmoid loss for Language-Image Pre-training. Phi-1.5 is a Transformer with 1.3 billion parameters that was trained using the same data sources as phi-1, augmented with a new data source that consists of various NLP synthetic texts. The LLaVa training dataset is a collection of multimodal instruction-following examples generated by interacting with GPT-4.
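The pairwise sigmoid loss mentioned above can be sketched in a few lines. This is a simplified, pure-Python illustration of the idea rather than the SigLIP training code: every image-text pair in a batch is treated as an independent binary classification (matching pairs labelled +1, all others -1). The function names and the `temperature` and `bias` values are illustrative assumptions.

```python
import math


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def normalize(v):
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def pairwise_sigmoid_loss(image_embs, text_embs, temperature=10.0, bias=-10.0):
    """Average sigmoid loss over all image-text pairs in the batch."""
    imgs = [normalize(v) for v in image_embs]
    txts = [normalize(v) for v in text_embs]
    n = len(imgs)
    total = 0.0
    for i in range(n):
        for j in range(n):
            label = 1.0 if i == j else -1.0  # only the diagonal pairs match
            similarity = sum(a * b for a, b in zip(imgs[i], txts[j]))
            total += log_sigmoid(label * (temperature * similarity + bias))
    return -total / n


# Toy batch: embeddings that line up give a lower loss than shuffled ones.
images = [[1.0, 0.0], [0.0, 1.0]]
aligned_texts = [[1.0, 0.0], [0.0, 1.0]]
shuffled_texts = [[0.0, 1.0], [1.0, 0.0]]
print(pairwise_sigmoid_loss(images, aligned_texts))
print(pairwise_sigmoid_loss(images, shuffled_texts))
```

Unlike a softmax-based contrastive loss, each pair contributes independently, so no normalisation across the whole batch is needed.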

Moondream1 is capable of describing images, scenarios, and visual contexts. This makes it particularly useful for tasks such as image recognition and description, scenario analysis, and context understanding. Despite its relatively small size, Moondream1 can even run on devices with low resources, making it a versatile tool for a variety of applications.

llava-v1.6-mistral-7b

LLaVA-v1.6-Mistral-7B is an open-source chatbot model developed by Liu Haotian. It is part of the LLaVA-NeXT series, which is known for its advanced capabilities in multimodal instruction-following tasks.

It was trained by fine-tuning the base model, Mistral-7B-Instruct-v0.2, on multimodal instruction-following data. The model was trained in December 2023.

The training dataset includes 558K filtered image-text pairs from LAION/CC/SBU, captioned by BLIP, 158K GPT-generated multimodal instruction-following data, 500K academic-task-oriented VQA data mixture, 50K GPT-4V data mixture, and 40K ShareGPT data.
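To see how the sources in this mixture compare in size, the figures can be tallied directly. The snippet below only does arithmetic on the numbers quoted above (in thousands of samples); the dictionary keys are shorthand labels, and the sum is a simple count across all listed sources.

```python
# Sizes in thousands of samples, copied from the paragraph above.
llava_v16_mix = {
    "LAION/CC/SBU image-text pairs": 558,
    "GPT-generated instruction data": 158,
    "academic-task VQA mixture": 500,
    "GPT-4V data mixture": 50,
    "ShareGPT data": 40,
}

total_k = sum(llava_v16_mix.values())
print(f"total: {total_k}K samples")
for name, size_k in llava_v16_mix.items():
    print(f"{name:<32} {size_k:>4}K  ({100 * size_k / total_k:.1f}%)")
```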

The evaluation dataset is a collection of 12 benchmarks, including 5 academic VQA benchmarks and 7 recent benchmarks specifically proposed for instruction-following LMMs.

LLaVA-v1.6-Mistral-7B earns its place on this list through its open-source release and its strong multimodal capabilities. It connects a vision encoder to the Mistral-7B-Instruct-v0.2 language model for general-purpose visual and language understanding. The LLaVA project describes chat capabilities in the spirit of the multimodal GPT-4, and the original LLaVA reported state-of-the-art accuracy on Science QA at the time of its release. This makes it a good choice for readers interested in open-source models that can run locally.

llava 1.5

LLaVA-1.5 is an open-source chatbot model developed by Liu Haotian. It’s a part of the LLaVA series, which is known for its advanced capabilities in multimodal instruction-following tasks. This series includes many models in various sizes, catering to different computational needs and use-cases.

LLaVA-1.5 is an auto-regressive language model based on the transformer architecture. It was trained by fine-tuning LLaMA/Vicuna on GPT-generated multimodal instruction-following data. The model was trained in September 2023.

Training Dataset

The training dataset includes 558K filtered image-text pairs from LAION/CC/SBU, captioned by BLIP, 158K GPT-generated multimodal instruction-following data, 450K academic-task-oriented VQA data mixture, and 40K ShareGPT data.

The evaluation dataset is a collection of 12 benchmarks, including 5 academic VQA benchmarks and 7 recent benchmarks specifically proposed for instruction-following LMMs.

Why it fits for the article

LLaVA-1.5 earns its place on this list through its open-source release and its strong multimodal capabilities. It combines a vision encoder with Vicuna for general-purpose visual and language understanding. The LLaVA project describes chat capabilities in the spirit of the multimodal GPT-4, and the original LLaVA reported state-of-the-art accuracy on Science QA at the time of its release. This makes it a good choice for readers interested in open-source models that can run locally.

Emu2

| Feature | Description |
|---|---|
| Multimodal response generation | It can generate coherent and diverse responses based on the textual and visual context of the conversation. |
| Image captioning, text-to-image generation, and image blending | It can perform these tasks using the Emu2 model. |
| Unified multimodal framework | It can handle multiple modalities such as text, image, audio, and video in a unified framework. |
| Domain adaptation | It can adapt to different domains and scenarios by fine-tuning the Emu2 model on specific datasets. |

Emu2-Chat is a multimodal chatbot built on Emu2, a large multimodal model (LMM) trained with a unified autoregressive objective. It generates responses from both textual and visual inputs, and the Emu2 family also covers tasks such as image captioning, text-to-image generation, and image blending. Emu2-Chat is available on Hugging Face, alongside the pretrained and instruction-tuned Emu2 weights, which makes it a good choice for developers and researchers exploring multimodal interaction. Emu2 is described in the paper “Generative Multimodal Models are In-Context Learners” by Sun et al.

Nous-Hermes 2 Vision Alpha

| Feature | Description |
|---|---|
| Model Type | Vision-Language Model |
| Inspiration | Based on OpenHermes-2.5-Mistral-7B by teknium |
| Encoder Integration | Incorporates SigLIP-400M, replacing traditional 3B vision encoders |
| Architecture | Lighter architecture for enhanced performance |
| Training Data | Custom dataset with 220K images from LVIS-INSTRUCT4V, 60K from ShareGPT4V, 150K private function calling data, 50K conversations from OpenHermes-2.5 |
| Functionality | Vision-Language Action Model for diverse automation applications |

The “Nous-Hermes-2-Vision-Alpha” model from NousResearch is an advanced Vision-Language Model inspired by the OpenHermes-2.5-Mistral-7B by teknium. Key features include the integration of SigLIP-400M, replacing traditional 3B vision encoders for improved performance and a lighter architecture. The model is also unique for its training data, which includes a custom dataset enriched with function calling, transforming it into a Vision-Language Action Model. The training dataset comprises 220K images from LVIS-INSTRUCT4V, 60K from ShareGPT4V, 150K private function calling data, and 50K conversations from teknium’s OpenHermes-2.5. It is designed for versatile automation applications.

Fuyu-8B

| Feature | Description |
|---|---|
| Size | Compact and efficient |
| Availability | Multimodal model available on Hugging Face |
| Architecture | Simplified design for ease of understanding |
| Use Cases | Digital agents; arbitrary image resolutions; graph and diagram questions; UI-based queries; fine-grained screen image localization |
| Speed | Responses for large images in <100 milliseconds |
| Performance | Excellent results in image understanding tasks |

Fuyu-8B is a local vision-language model available on Hugging Face. Its strength lies in its simplicity, versatility, and speed.

Key Features:

- Simplified Architecture: Fuyu-8B offers a straightforward architecture and training process, making it easy to understand and deploy.

- Digital Agent-Focused: Designed for digital agents, it excels at handling various tasks, including image analysis, answering questions about graphs and diagrams, and fine-grained screen image localization.

- Speed: Fuyu-8B provides rapid responses, processing large images in under 100 milliseconds.

- Strong Performance: It performs exceptionally well in standard image understanding tasks like visual question-answering and image captioning.

Fuyu-8B is an excellent choice for developers and researchers seeking a versatile and efficient LLM for image-related tasks. Its availability on HuggingFace makes it accessible to a wide audience, promising exciting possibilities for various applications.

IDEFICS 9B and 80B

| Key Features | Fit For |
|---|---|
| Multimodal understanding | Language generation |
| Uses publicly accessible data and models | Answering questions related to images |
| Two versions: 9 billion and 80 billion parameters | Describing visual content |
| Versatile in various domains | Crafting narratives from images |
| High-performance on image-text benchmarks | Pure language model |

IDEFICS (Image-aware Decoder Enhanced à la Flamingo with Interleaved Cross-attentionS) is an open-access visual language model that brings multimodal understanding to language generation. It is an open reproduction of Flamingo, a visual language model developed by DeepMind that was never publicly released. Like GPT-4, IDEFICS accepts arbitrary sequences of image and text inputs and produces coherent text outputs.

What sets IDEFICS apart is that it is built solely on publicly available data and models, particularly LLaMA v1 and OpenCLIP. It comes in two variants, a base version and an instructed version, and each variant is available in two sizes: 9 billion and 80 billion parameters.

The versatility of IDEFICS is worth emphasizing. It can excel in multiple domains, ranging from answering questions related to images and describing visual content, to fabricating narratives that are deeply rooted in multiple images. It even possesses the capacity to function as a pure language model without the inclusion of visual data.

Crucially, IDEFICS exhibits performance at par with its original closed-source predecessor on a range of image-text benchmarks. This includes tasks such as visual question answering, both open-ended and multiple choice, crafting descriptive captions for images, and even classifying images within the context of few-shot learning scenarios. With its two available parameter sizes, 9 billion and 80 billion, IDEFICS caters to diverse needs while maintaining its proficiency in bridging the gap between language and imagery.

Lynx

| Key Features | Fit For |
|---|---|
| 20+ variants exploring multimodal capabilities | Sophisticated comprehension tasks |
| Focus on network structures, model designs, training data impact, and prompt diversity | Effective instruction-following |
| Expansive evaluation set for image and video tasks | High-performance comprehension |
| Vision-language synergy through dedicated encoder | Vision and language processing |
| “Prefix-finetuning” structure for text generation | Aligning text with input instructions |

Lynx is a cutting-edge local multimodal language model designed for sophisticated comprehension and generation tasks. It introduces a diverse array of over 20 meticulously crafted variants, each exploring distinct aspects of multimodal capabilities.

In-depth Exploration: Lynx’s development focuses on network structures, model designs, training data impact, and prompt diversity. These controlled settings facilitate refined understanding and effective instruction-following.

Comprehensive Evaluation: Lynx’s innovation lies in its creation of an expansive evaluation set, covering image and video tasks via crowd-sourced input. This set benchmarks the model’s performance comprehensively.

Performance Excellence: According to its authors, Lynx delivers the most accurate multimodal understanding while retaining the best multimodal generation ability among the open-source GPT-4-style models they compared.

Vision-Language Synergy: Lynx harmonizes vision and instruction tokens through a dedicated vision encoder, followed by concatenated processing for task execution.

Elegant Architecture: Lynx’s hallmark is the “prefix-finetuning” (PT) structure, simplifying text generation aligned with input instructions through decoder-only LLMs.

Cheetor

| Key Features | Fit For |
|---|---|
| Process intricate vision-language instructions | Reasoning across complex scenarios |
| Interleaved Vision-Language Context | Diverse and intricate instruction formats |
| Wide array of instruction-following scenarios | Real-world applications |
| Multi-modal conversations with humans | Comprehending unconventional visual elements |

Cheetor, an advanced local multimodal language model, processes intricate vision-language instructions and reasons across scenarios where images and text are interleaved. In the examples its authors present, Cheetor identifies connections between images to explain the causes of unusual occurrences, deduces relationships among images and the metaphors they convey, and holds multimodal conversations with humans, including about unconventional visual elements.

Cheetor’s prowess can be attributed to three key attributes:

- Interleaved Vision-Language Context: Cheetor seamlessly integrates both images and text within its instructions. Whether it’s unraveling storyboards accompanied by scripts or deciphering diagrams in textbooks, Cheetor navigates diverse visual-textual sequences with ease.

- Diverse Complex Instruction Formats: The model’s versatility shines through a wide spectrum of instructions. From predicting dialogue for comics to detecting disparities in surveillance images and tackling conversational embodied tasks, Cheetor effortlessly handles varied and intricate tasks.

- Wide Array of Instruction-Following Scenarios: Cheetor’s capabilities span across numerous real-world applications. Its expertise encompasses domains like cartoons, industrial images, and driving recordings, making it adaptable to an extensive range of scenarios.

LLaVA 13B & 7B

| Key Features | Fit For |
|---|---|
| Simple visual reasoning tasks | Research purposes |
| Primarily designed for research | Computer vision and NLP enthusiasts |
| Surprising performance in complex tasks | Exploring boundaries of multimodal models |

LLaVA 13B and 7B are open-source multimodal chatbot models. Trained on GPT-generated multimodal data, they do well on simple visual reasoning tasks. Although their performance on complex visual reasoning is not outstanding, they can still surprise users in various ways. They are primarily intended for research, serving computer vision, natural language processing, and AI enthusiasts. For more information, visit https://llava-vl.github.io/. Questions and comments can be directed to the GitHub repository at https://github.com/haotian-liu/LLaVA/issues.

While LLaVA 7B may not excel in handling complex visual reasoning tasks, it still manages to surprise users with its ability to tackle certain challenging scenarios. Its performance in these complex tasks might exceed expectations, making it an intriguing choice for those looking to explore the boundaries of multimodal models.

MiniGPT-4 7B & 13B

MiniGPT-4 7B and 13B are two powerful multimodal language models designed to understand both text and visual information. With 7 billion and 13 billion parameters respectively, these models leverage the capabilities of MiniGPT-4, which combines a frozen visual encoder with a frozen LLM called Vicuna, using a single projection layer.
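The role of the single projection layer can be shown with a toy example. This is a minimal sketch under stated assumptions, not MiniGPT-4's code: the dimensions are tiny placeholders, the weights are random, and `project_visual_tokens` is a hypothetical helper. It shows how visual features from a frozen encoder are mapped into the embedding size the frozen language model expects, then placed ahead of the text embeddings.

```python
import random


def project_visual_tokens(visual_tokens, weight, bias):
    """Linear layer: map each visual token (length d_vis) to length d_llm."""
    projected = []
    for token in visual_tokens:
        projected.append([
            sum(w * x for w, x in zip(row, token)) + b
            for row, b in zip(weight, bias)
        ])
    return projected


random.seed(0)
D_VIS, D_LLM, N_VIS, N_TEXT = 4, 6, 3, 5  # toy sizes, far smaller than a real model

# Stand-ins for the frozen vision encoder's output and the text embeddings.
visual_tokens = [[random.uniform(-1, 1) for _ in range(D_VIS)] for _ in range(N_VIS)]
text_embeddings = [[0.0] * D_LLM for _ in range(N_TEXT)]

# The projection is the only trainable part in this sketch.
weight = [[random.uniform(-0.1, 0.1) for _ in range(D_VIS)] for _ in range(D_LLM)]
bias = [0.0] * D_LLM

llm_input = project_visual_tokens(visual_tokens, weight, bias) + text_embeddings
print(len(llm_input), len(llm_input[0]))  # 8 tokens, each of size 6
```

Keeping both large components frozen means only the small projection needs gradients, which is why this style of alignment is comparatively cheap to train.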

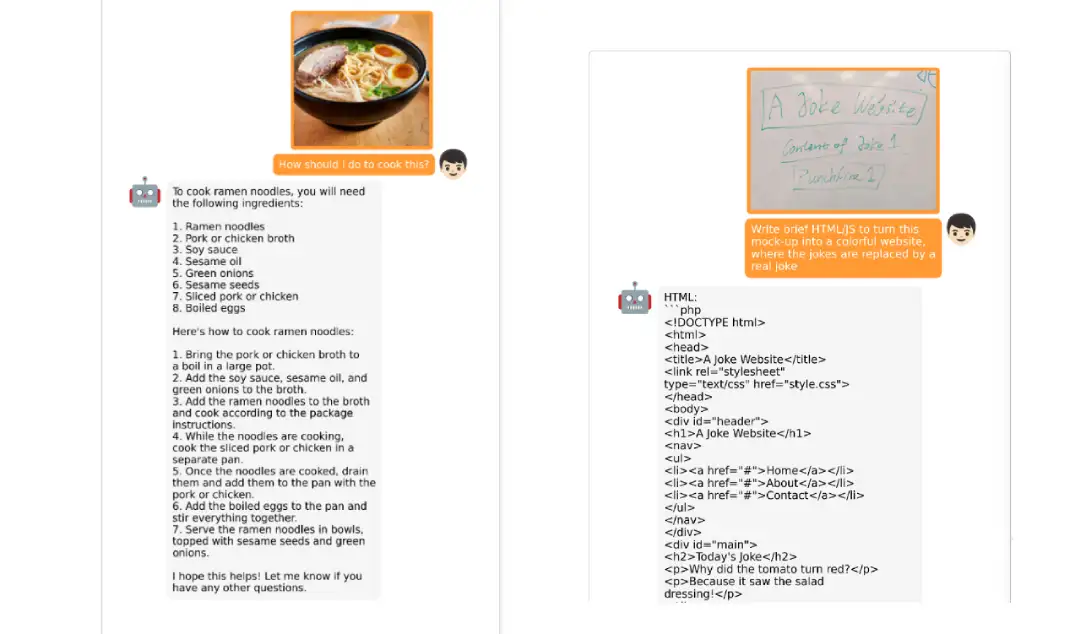

These models exhibit numerous capabilities similar to those of GPT-4, such as generating detailed descriptions for images and creating websites based on hand-written drafts. In addition to these features, MiniGPT-4 demonstrates emerging capabilities like crafting stories and poems inspired by given images, providing solutions to visual problems, and even offering cooking instructions based on food photos.

MiniGPT-4 aligned with Vicuna-7B offers a pretrained version whose demo needs as little as 12 GB of GPU memory. These models do well on simple visual reasoning tasks but may not perform as well on complex visual tasks. Nevertheless, they can still surprise users with their ability to tackle certain complex challenges.

OpenFlamingo v2

OpenFlamingo v2 is a local multimodal model that processes interleaved sequences of images and text to generate textual output. By handling interleaved examples, it can perform tasks such as captioning, visual question answering, and image classification.
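An interleaved few-shot prompt can be assembled as plain text. The sketch below assumes the `<image>` and `<|endofchunk|>` special tokens used in the OpenFlamingo project's README example; `build_interleaved_prompt` is a hypothetical helper, and the actual images would be passed to the model separately, in the same order as the `<image>` markers.

```python
IMAGE_TOKEN = "<image>"           # marks where an image sits in the sequence
END_OF_CHUNK = "<|endofchunk|>"   # closes the text that belongs to one image


def build_interleaved_prompt(example_captions, query_prefix):
    """Build a few-shot prompt: completed examples, then an open-ended query."""
    parts = [f"{IMAGE_TOKEN}{caption}{END_OF_CHUNK}" for caption in example_captions]
    parts.append(f"{IMAGE_TOKEN}{query_prefix}")  # the model continues from here
    return "".join(parts)


prompt = build_interleaved_prompt(
    ["An image of two cats.", "An image of a bathroom sink."],
    "An image of",
)
print(prompt)
```

Each completed example shows the model the expected output format, and the final open-ended segment is what it is asked to complete for the last image.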

Building upon the Flamingo modeling paradigm, OpenFlamingo v2 enhances the layers of a pre-trained language model, enabling them to leverage visual features during the decoding process. Following this approach, the vision encoder and language model are frozen, while the connecting modules are trained using image-text sequences obtained from web scraping. A combination of LAION-2B and Multimodal C4 serves as the basis for training.

OpenFlamingo v2 is a versatile multimodal model. It can count objects within an image, read and comprehend text, explain what an image shows, and more. These multimodal capabilities take it beyond traditional text-only models, letting it understand and process visual information effectively.

Otter-9B

Otter-9B is a local multimodal model that extends understanding beyond text. Otter v0.1 introduced support for multiple image inputs as in-context examples, making it the first multimodal instruction-tuned model to organize inputs in this manner.

Building upon this achievement, Otter v0.2 expands its capabilities further by supporting video inputs, where frames are arranged similarly to Flamingo’s original implementation. Moreover, it continues to embrace multiple image inputs, leveraging them as in-context examples for each other. This enhanced flexibility and integration of visual elements empower Otter-9B to understand daily scenes, reason in context, spot differences in observations, and act as an egocentric assistant.

One of the standout features of Otter-9B is its multilingual support. In addition to English, it also caters to a global audience by offering compatibility with Chinese, Korean, Japanese, German, French, Spanish, and Arabic languages. This inclusive approach enables a larger user base to benefit from the convenience brought about by advancements in artificial intelligence, fostering engagement and accessibility across diverse cultures.